Understanding AI Adoption and How to Create Real Value

AI adoption has crossed the “early adopter” phase. It is already part of day-to-day work in many companies, even when there is no formal rollout plan. People use it to draft emails, summarize meetings, answer customer questions, write code, and speed up research.

What is changing in 2025 is not whether AI is being used. It is how uneven the progress looks once you try to scale it.

McKinsey’s 2025 global survey shows 88% of respondents report regular AI use in at least one business function, up from 78% a year earlier. At the same time, nearly two-thirds say their organizations have not yet begun scaling AI across the enterprise.

So adoption is mainstream, yet enterprise-wide value is still hard to capture.

This blog breaks down what AI adoption looks like right now, why scaling is the real bottleneck, what is happening with AI agents, and what practical moves help organizations go from “pilot” to “profit.”

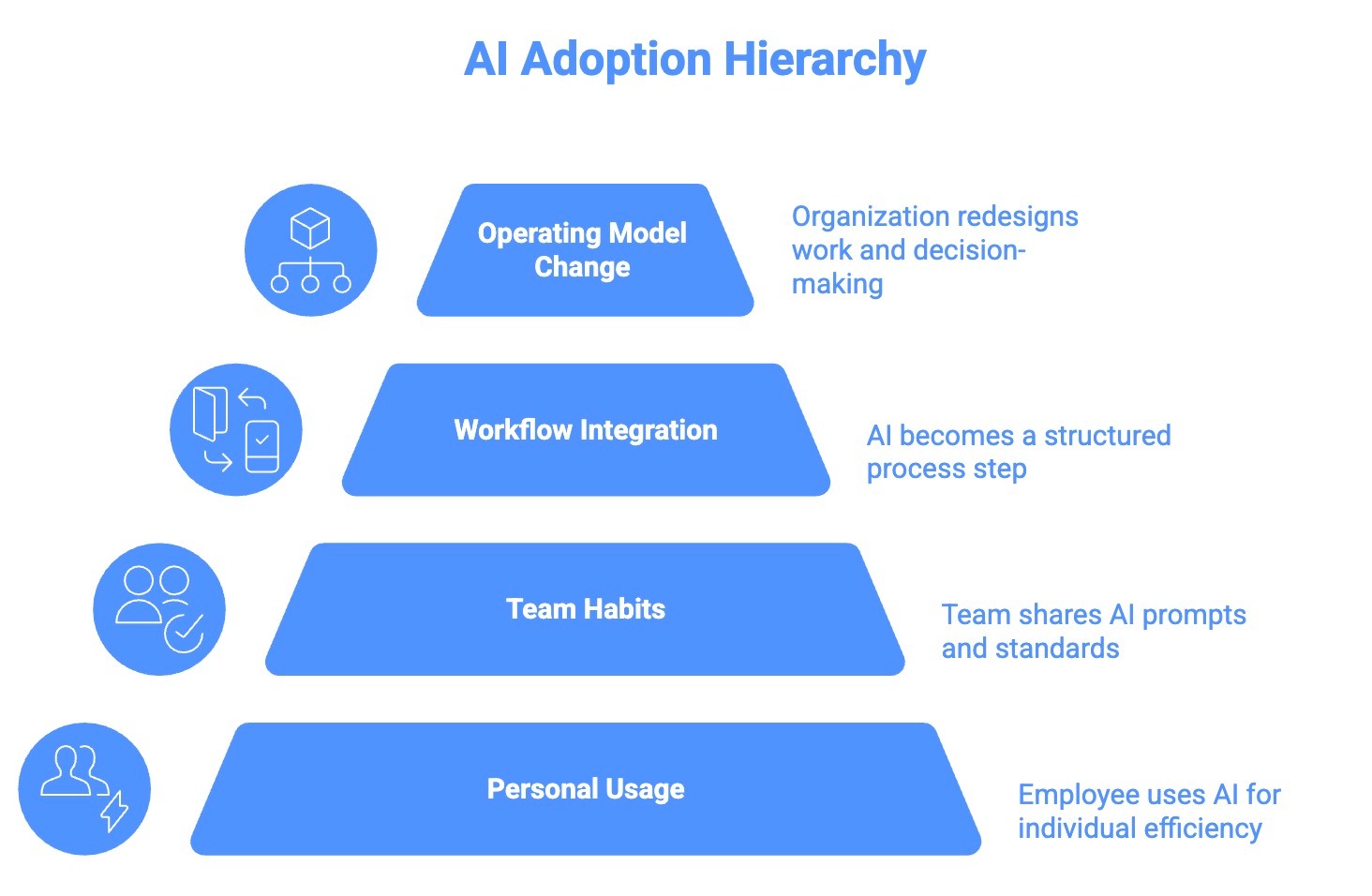

What Counts as AI Adoption

“AI adoption” gets used as a catch-all phrase. In practice, it usually means one of these:

- Personal usage: An employee uses AI to move faster in their own work.

- Team habits: A team starts sharing prompts, examples, and standards. Usage becomes repeatable, and quality improves.

- Workflow integration: AI becomes part of the process. There is a clear “AI step” with inputs, checks, and ownership.

- Operating model change: The organization redesigns how work flows, how decisions are made, and who owns what. Adoption becomes part of the system.

If you track “AI adoption” with login counts alone, you mostly measure the first two layers. The impact shows up when you reach the last two.

Why Usage Goes Up While Impact Stays Flat

Three patterns explain the adoption puzzle in plain terms.

1) AI stays in side quests

Teams begin experimenting with AI in safe, low-risk ways. They use it to draft emails, summarize long documents, pull together quick research, capture meeting notes, or set up basic automations that make everyday tasks easier.

These use cases absolutely help. They save time and reduce small operational friction. The issue is that they usually sit at the edges of the business rather than at its core.

When AI is limited to surface-level productivity tasks, it rarely touches the workflows that actually influence revenue, retention, product development, or customer experience. As a result, the gains remain incremental. Real impact only starts to show up when AI becomes part of the systems that drive how the business actually operates.

2) People adopt AI in stages, not all at once

Employees rarely jump from “I tried it once” to “AI is now part of how I run my role.”

They move through stages as trust builds and the environment supports them. Here is a simple version of that journey, one you can actually observe inside teams:

Stage 1: Information help

At first, AI is treated like a smarter search bar: quick answers, quick drafts, quick explanations.

Stage 2: Task help

Then AI helps with defined tasks, such as rewriting a paragraph, creating a basic outline, or summarizing a call.

Stage 3: Delegation

Real adoption shows up when employees hand off actual work to AI: drafting email sequences, building first-pass reports, and turning notes into specs.

Stage 4: Collaboration inside a workflow

AI supports a workflow end-to-end with human oversight. It plans steps, executes parts, asks for missing inputs, and improves across iterations.

Impact accelerates around Stage 4 because work gets redesigned rather than just sped up.

3) A single rollout strategy misses the human reality

Adoption varies massively by person. Some employees are curious and fast. Some are cautious. Some are busy and overwhelmed. Some need structure before they will touch a new tool.

When companies treat adoption like one training session and a blanket announcement, progress becomes uneven and fragile.

Four Moves That Increase AI Impact Without Forcing Chaos

You can push AI adoption forward without turning the organization upside down. These four moves work because they respect how people actually change habits.

1) Pick a few core workflows and go deep

Do not spread effort across twenty use cases. Pick two or three workflows per function where impact is obvious.

Examples:

- Customer support ticket resolution

- Sales follow-up and CRM hygiene

- Marketing campaign build cycle

- Finance variance analysis

- Recruiting coordination and screening

- Internal knowledge retrieval for customer-facing teams

Depth builds repeatability. Repeatability builds confidence. Confidence creates adoption.

2) Make managers the adoption multipliers

Employees take cues from their manager’s behavior more than from leadership memos.

If managers model usage, encourage learning, and protect time for experimentation, adoption sticks.

If managers are skeptical or silent, employees learn that AI is optional and risky.

A few simple manager plays:

- One weekly team share of “how I used AI this week.”

- One approved prompt template per role

- One shared rule for validation and review

In some teams, managers also pair these practices with a virtual assistant to handle repeatable follow-ups, documentation, and workflow hygiene.

3) Create space for learning during work hours

People rarely build new habits when they are overloaded.

Adoption often stalls for a boring reason: employees cannot spare the time to learn how to use AI well. They stick to familiar routines because that feels safe and fast.

You do not need a giant program. You need a protected space:

- 30 minutes twice a week for four weeks

- Role-specific tasks only

- Share outputs and learn together

4) Put guardrails where trust breaks

Trust fails when AI outputs are wrong and nobody knows how to catch the errors. So define basic rules early:

- Which data sources are allowed

- What cannot be pasted into tools

- What requires human validation

- What gets logged and reviewed

Guardrails are not a blocker. They are what make scaling possible.

How to Measure AI Adoption Without Fooling Yourself

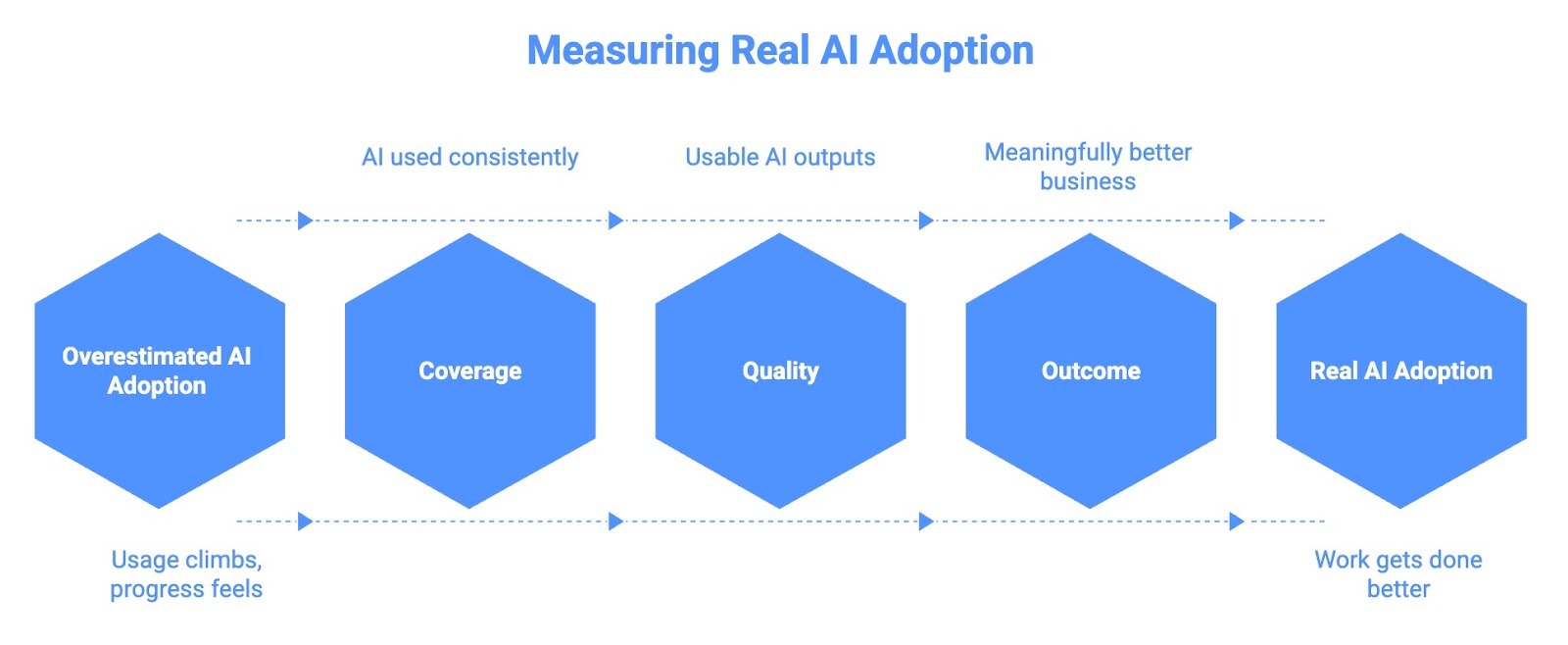

AI adoption is easy to overestimate. Dashboards light up, usage climbs, and people start talking about prompts. It looks like progress. But usage mostly tells you that people are curious, not that AI is meaningfully changing how work gets done.

Tracking usage alone is not enough. To understand real adoption, look beyond surface-level engagement and focus on three deeper signals:

1) Coverage: Where AI Is Actually Being Used

Coverage answers a simple question: which workflows are using AI consistently, and which ones are not.

This is different from counting how many people have tried a tool. A workflow has adoption when AI is part of the standard way work gets done, not an optional extra.

Good coverage questions sound like:

- Is AI used every time this workflow runs, or only when someone remembers?

- Is usage consistent across the team, or dependent on a few power users?

- Do new hires learn the AI-enabled version of the workflow by default?

If AI use disappears when one person is out sick, coverage is low. Real adoption shows up when work continues the same way regardless of who is running it.
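To make the coverage questions concrete, here is a minimal Python sketch of how a team might compute them from a simple run log. Everything in it is an assumption for illustration: the `runs` log format, the workflow and person names, and the `coverage_by_workflow` helper are hypothetical, not part of any specific tool.

```python
from collections import defaultdict

# Hypothetical run log: (workflow, team_member, used_ai).
# In practice this might come from a ticketing system or CRM export.
runs = [
    ("support_ticket", "ana", True),
    ("support_ticket", "ana", True),
    ("support_ticket", "ben", False),
    ("support_ticket", "cho", False),
    ("sales_followup", "ana", True),
    ("sales_followup", "ben", True),
]


def coverage_by_workflow(runs):
    """Return {workflow: (run_coverage, people_coverage)}.

    run_coverage: share of workflow runs where AI was used.
    people_coverage: share of people running the workflow who used AI at least once.
    """
    total_runs = defaultdict(int)
    ai_runs = defaultdict(int)
    people = defaultdict(set)
    ai_people = defaultdict(set)

    for workflow, person, used_ai in runs:
        total_runs[workflow] += 1
        people[workflow].add(person)
        if used_ai:
            ai_runs[workflow] += 1
            ai_people[workflow].add(person)

    return {
        w: (ai_runs[w] / total_runs[w], len(ai_people[w]) / len(people[w]))
        for w in total_runs
    }


coverage = coverage_by_workflow(runs)
for workflow, (run_cov, people_cov) in coverage.items():
    print(f"{workflow}: {run_cov:.0%} of runs, {people_cov:.0%} of people")
```

In this made-up data, the support workflow uses AI in half of its runs, but all of that usage comes from one person out of three. That is the "one person out sick" risk described above, and a plain usage count would hide it.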

2) Quality: Whether AI Outputs Hold Up

Quality is where many AI efforts quietly fail.

Early on, teams often accept rough outputs because speed feels exciting. Over time, frustration builds if rework stays high or errors repeat.

Quality metrics focus on how usable AI outputs actually are:

- How often outputs pass review without heavy edits

- Where human intervention is required and why

- Which failure modes keep showing up in the same steps

When quality improves, trust grows. When trust grows, adoption deepens. This is also where guardrails start paying off. Clear inputs, defined validation steps, and shared standards reduce rework and make AI feel reliable rather than risky.
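As a rough illustration of the quality signals listed above, the Python sketch below computes a review pass rate and counts recurring failure modes per step. The review log format, the verdict labels (`pass`, `light_edit`, `heavy_edit`), and the `quality_summary` helper are all assumptions made for this example; a real team would define its own categories.

```python
from collections import Counter

# Hypothetical review log: (workflow_step, verdict, failure_mode).
# failure_mode is None when the output needed no heavy rework.
reviews = [
    ("draft_reply", "pass", None),
    ("draft_reply", "light_edit", None),
    ("draft_reply", "heavy_edit", "wrong_policy_cited"),
    ("summarize_call", "pass", None),
    ("summarize_call", "heavy_edit", "missed_action_item"),
    ("draft_reply", "heavy_edit", "wrong_policy_cited"),
]


def quality_summary(reviews):
    """Return (pass_rate, failure_modes).

    pass_rate: share of outputs accepted without heavy edits.
    failure_modes: list of ((step, failure_mode), count), most frequent first.
    """
    if not reviews:
        return 0.0, []

    accepted = sum(
        1 for _, verdict, _ in reviews if verdict in ("pass", "light_edit")
    )
    failures = Counter(
        (step, mode) for step, _, mode in reviews if mode is not None
    )
    return accepted / len(reviews), failures.most_common()


pass_rate, failure_modes = quality_summary(reviews)
print(f"Pass rate without heavy edits: {pass_rate:.0%}")
for (step, mode), count in failure_modes:
    print(f"{step}: {mode} x{count}")
```

Grouping failures by step and mode shows where a guardrail or a clearer input would pay off first. In this sample data, the repeated policy error in the reply-drafting step stands out.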

3) Outcome: What Actually Changed

Outcomes are the hardest to measure and the most important.

Outcomes look beyond the AI tool and back to the business problem the workflow exists to solve. They answer whether AI made things meaningfully better.

Common outcome signals include:

- Faster cycle times or fewer handoffs

- Lower error rates or fewer escalations

- Higher throughput without burnout

- Better customer satisfaction or response quality

- Measurable influence on revenue or cost to serve

Not every workflow ties directly to revenue, and that is fine. The goal is to pick outcomes that matter to the function and track them consistently over time.

Do You Have the Bandwidth to Boost AI Adoption?

AI adoption is no longer limited by access to tools. Most teams already have more capability at their fingertips than they know how to use. What holds organizations back now is execution. Embedding AI into real workflows, maintaining quality, and turning early wins into repeatable outcomes all require consistent operational support.

This is where many teams feel the strain. Leaders want AI to move faster, but internal teams are already stretched. Managers juggle adoption alongside existing responsibilities. Important work like process maintenance, review, and follow-through becomes fragmented, and momentum fades.

Strong operators make the difference here. People who can run processes, support AI-enabled workflows, and keep systems working day after day create the conditions where adoption actually sticks. Without that layer, even well-designed AI initiatives struggle to scale.

At Pearl Talent, we work with companies at this exact point. We help teams add high-caliber global operators who integrate into existing workflows and support AI-driven operations without creating overhead. You can get your next hire in under two weeks at a fraction of the cost, without compromising on quality.
