
How to Use AI in Hiring to Find Better Candidates Faster

AI is already being used to write emails, ship products faster, and support medical diagnosis. In many industries, it has moved from experimentation to everyday use, and hiring teams are now building it into their own processes.

Recruiting teams are applying AI to resume screening, candidate matching, outreach, and interview notes because these steps repeat across every open role and break first when volume increases. According to a report by Boston Consulting Group, 70 percent of companies experimenting with AI or GenAI are doing so within HR, with talent acquisition as the most common use case.

The interest isn’t theoretical. Teams want to know where AI actually fits into hiring workflows, what it can reliably handle, and where it creates risk if left unchecked.

This guide explains how to use AI in hiring in a practical way, step by step, focusing on real hiring tasks rather than abstract capabilities.

10 Practical Ways to Use AI in Hiring

AI becomes useful in hiring when it is attached to a specific step in the workflow. The ideas below focus on concrete hiring tasks teams already struggle with and show where AI can meaningfully reduce friction or improve consistency.

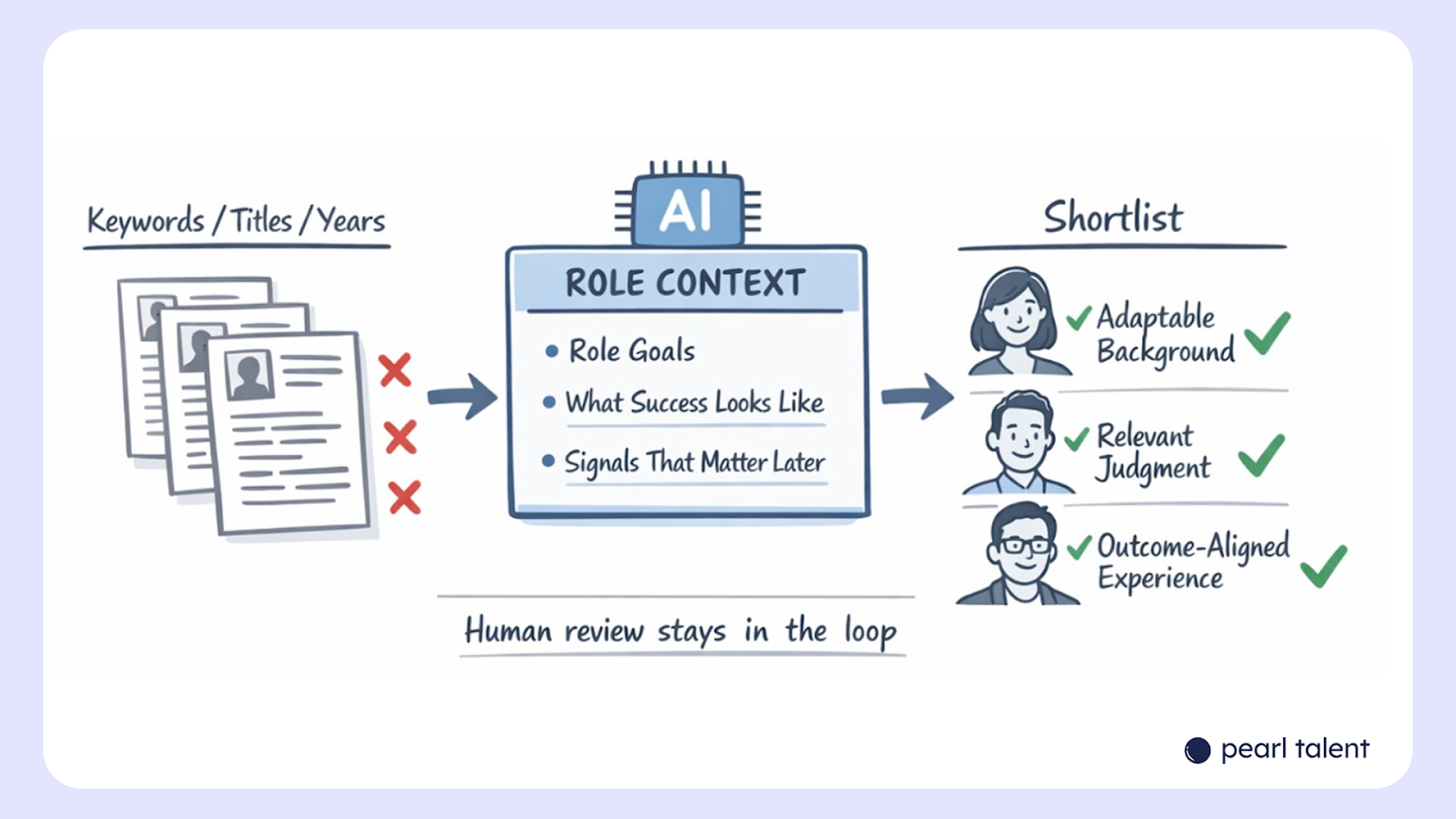

1. Resume triage based on role context, not keywords

Most resume screening fails because it relies on surface-level matches. AI can be prompted to evaluate resumes against the actual role context instead of static keywords. This means giving the model information about the role’s goals, common career paths that succeed in it, and signals that matter later in interviews.

Used well, this approach surfaces candidates who may not look perfect on paper but align strongly with outcomes.

For example, a candidate who moved laterally across functions may rank higher than one with a linear title history if the role values adaptability. Recruiters still review the shortlist, but the initial cut becomes faster and more consistent. Over time, teams can refine prompts based on which AI-ranked candidates convert to interviews or offers, tightening the signal loop.

2. Job description cleanup before sourcing begins

Many hiring problems start with unclear job descriptions. AI can help rewrite JDs by stripping vague language, aligning responsibilities with real expectations, and flagging requirements that screen out capable candidates unnecessarily.

This works best when the recruiter, recruitment agency, or hiring manager inputs past performance data or notes from previous hires. The model can highlight gaps, such as inflated experience requirements or responsibilities that don’t match the level. Instead of publishing a generic JD, teams use AI to pressure-test clarity before sourcing even starts. Better inputs lead to better applicants, reducing downstream screening effort and misalignment during interviews.

3. Smarter sourcing across fragmented profiles

AI can aggregate signals from resumes, LinkedIn profiles, portfolios, and application answers to build a more complete candidate picture. Instead of reviewing each source separately, recruiters get a consolidated summary that highlights strengths, overlaps, and potential concerns.

This is especially helpful for roles where experience shows up unevenly across platforms. Someone may undersell themselves on a resume but demonstrate depth through projects or written responses. AI helps normalize that information so candidates are evaluated more evenly. Recruiters still decide who moves forward, but they spend less time assembling context and more time assessing fit.

4. Candidate ranking that adapts mid-search

Early hiring assumptions are often wrong. AI-based ranking can be adjusted mid-search based on feedback from interviews already conducted. If early interviews reveal new success signals, the ranking logic can be updated without restarting the pipeline.

For example, if interviewers consistently value problem framing over domain familiarity, AI can reprioritize candidates showing that signal. This keeps the funnel aligned with real-world learnings instead of locked-in assumptions. It also prevents teams from rejecting strong candidates early because the initial criteria were off.
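A minimal sketch of that reprioritization, assuming each candidate already has signal scores between 0 and 1 (from reviewers or a model). The `Candidate` class, the `rank` function, the signal names, and the weights are all hypothetical and only illustrate the idea of changing weights without rebuilding the pipeline.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    # Hypothetical signal scores between 0 and 1.
    signals: dict[str, float]


def rank(candidates: list[Candidate], weights: dict[str, float]) -> list[str]:
    """Return candidate names ordered by weighted signal score, best first."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")

    def score(c: Candidate) -> float:
        # Missing signals count as 0 so incomplete profiles are not rewarded.
        return sum(w * c.signals.get(k, 0.0) for k, w in weights.items()) / total

    return [c.name for c in sorted(candidates, key=score, reverse=True)]


candidates = [
    Candidate("A", {"domain_familiarity": 0.9, "problem_framing": 0.4}),
    Candidate("B", {"domain_familiarity": 0.5, "problem_framing": 0.9}),
]

# Initial assumption: domain familiarity matters most.
initial = rank(candidates, {"domain_familiarity": 0.7, "problem_framing": 0.3})

# After interview feedback, problem framing is weighted more heavily.
updated = rank(candidates, {"domain_familiarity": 0.3, "problem_framing": 0.7})

print(initial, updated)
```

With these made-up numbers, candidate A leads under the initial weights and candidate B leads after the update. The candidates and their data are unchanged; only the weights moved, and a recruiter still reviews the resulting order.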

5. AI-assisted outreach personalization at scale

Generic outreach reduces response rates and damages the employer brand. AI can generate personalized outreach that references specific experience, career transitions, or projects without recruiters writing each message manually.

This works when guardrails are clear. Recruiters define tone, length, and what should never be assumed. AI handles the repetitive drafting while humans review samples for quality. The result is higher response rates with less manual effort, especially in hard-to-fill roles where outbound sourcing matters.

6. Interview question generation tied to role risks

AI can help generate interview questions based on known failure points in a role. Instead of generic behavioral questions, teams input scenarios where past hires struggled.

The model produces targeted prompts that test judgment, trade-offs, and decision-making. Interviewers still choose which questions to ask, but preparation improves. This leads to interviews that surface useful signals earlier, reducing the number of rounds needed to reach a decision.

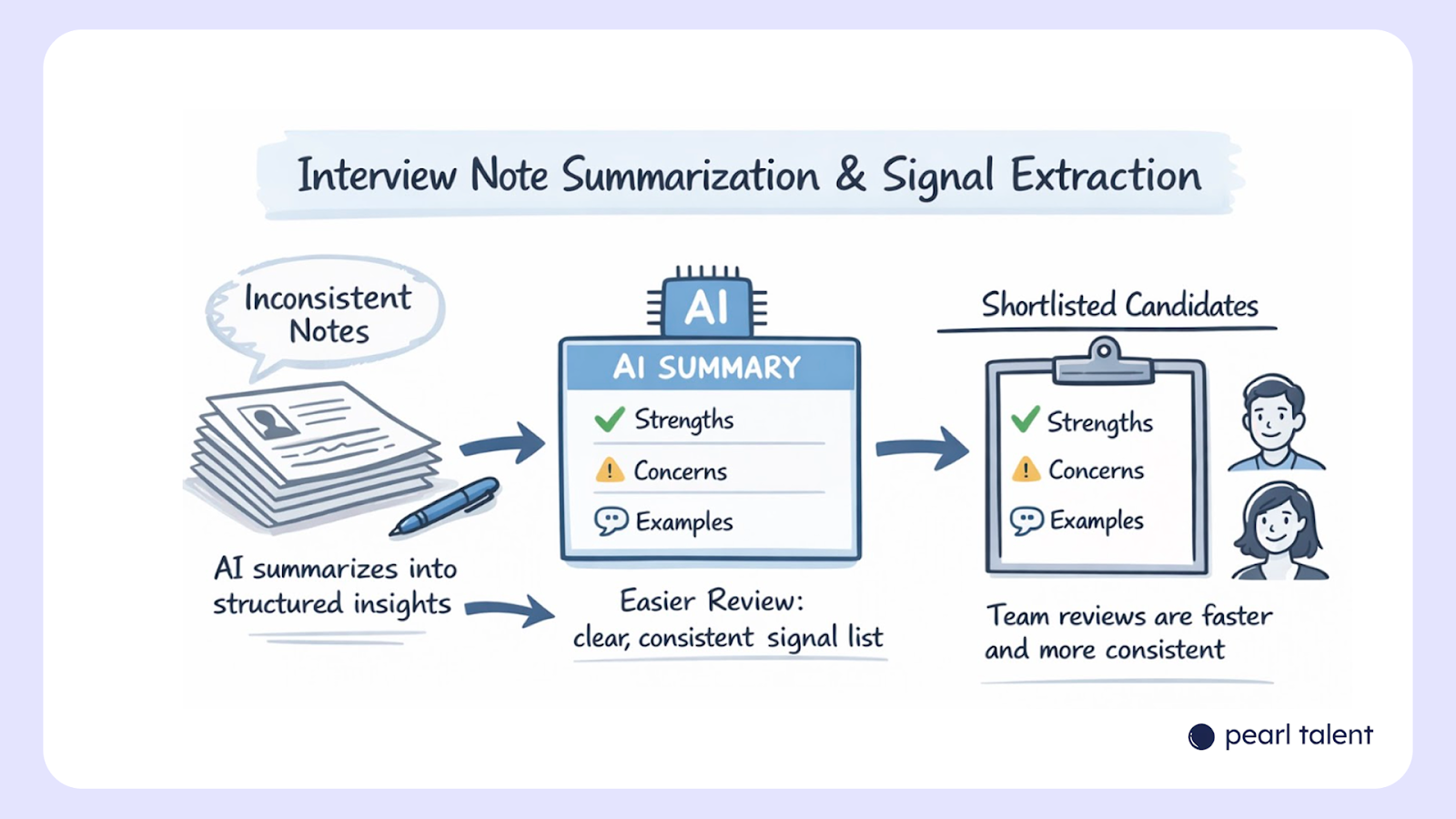

7. Interview note summarization and signal extraction

Interview notes are often inconsistent and hard to compare across candidates. AI can summarize notes into structured insights such as strengths, concerns, and examples cited.

This helps hiring managers review candidates faster and reduces reliance on memory or subjective impressions. The summary acts as a decision aid, not a replacement. Over time, teams can standardize what signals they want extracted, improving consistency across interviewers.

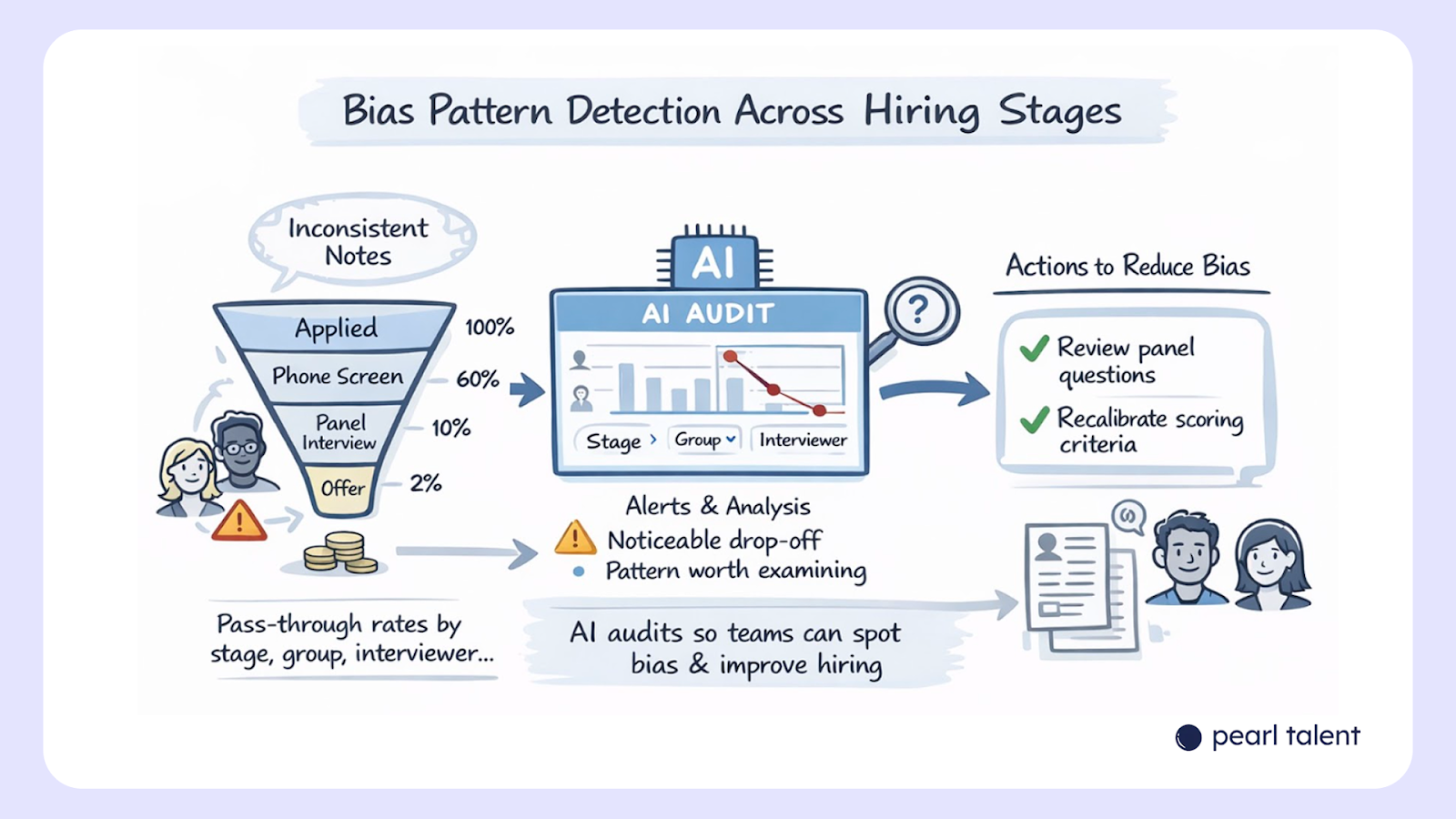

8. Bias pattern detection across hiring stages

AI can be used to audit hiring outcomes, not to make decisions. By analyzing pass-through rates by stage, role, or interviewer, models can surface patterns teams may miss.

For example, if candidates from certain backgrounds consistently drop at the same stage, that’s a signal worth examining. This use of AI supports accountability rather than automation. It helps teams ask better questions about their process without introducing new decision-making risks.
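As a rough illustration, pass-through rates can be computed from a simple export of stage outcomes. The record layout, the group labels, and the 0.2 gap threshold below are assumptions made for this sketch, not a legal or statistical standard, and real audits need far larger samples than this toy data.

```python
from collections import defaultdict

# Hypothetical audit records: (stage, group, passed_stage)
records = [
    ("screen", "group_a", True), ("screen", "group_a", True),
    ("screen", "group_a", True), ("screen", "group_a", False),
    ("screen", "group_b", True), ("screen", "group_b", False),
    ("screen", "group_b", False), ("screen", "group_b", False),
    ("onsite", "group_a", True), ("onsite", "group_a", False),
    ("onsite", "group_b", True), ("onsite", "group_b", False),
]


def pass_through_rates(records):
    """Return {stage: {group: share of candidates who passed}}."""
    counts = defaultdict(lambda: defaultdict(lambda: [0, 0]))  # [passed, total]
    for stage, group, passed in records:
        counts[stage][group][0] += int(passed)
        counts[stage][group][1] += 1
    return {
        stage: {g: p / t for g, (p, t) in groups.items()}
        for stage, groups in counts.items()
    }


def flag_gaps(rates, max_gap=0.2):
    """List stages where group pass rates differ by more than max_gap."""
    return [
        stage for stage, groups in rates.items()
        if max(groups.values()) - min(groups.values()) > max_gap
    ]


rates = pass_through_rates(records)
flagged = flag_gaps(rates)
print(rates, flagged)
```

Here the screening stage is flagged because one group passes at 75 percent and the other at 25 percent, while the onsite stage is not. A flag is a prompt for a human to look closer at that stage, not a conclusion about its cause.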

9. Offer benchmarking and consistency checks

AI can compare offers against internal bands, market data, and past offers for similar roles. This reduces errors, delays, and inconsistencies that frustrate candidates late in the process.

Recruiters input role details, seniority, and location assumptions. AI flags outliers or missing elements before the offer goes out. The final decision stays human, but the preparation becomes faster and cleaner.
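The check itself can be very simple. The sketch below assumes a hypothetical `Offer` record, a made-up internal band table keyed by role, level, and location, and an illustrative list of required fields. None of these come from a real compensation system.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Offer:
    role: str
    level: str
    location: str
    base_salary: Optional[float] = None
    start_date: Optional[str] = None


# Hypothetical internal bands keyed by (role, level, location): (min, max).
BANDS = {
    ("analyst", "mid", "remote"): (50_000, 70_000),
}

# Illustrative list of fields that must be filled before an offer goes out.
REQUIRED_FIELDS = ("base_salary", "start_date")


def check_offer(offer: Offer) -> list[str]:
    """Return human-readable flags; an empty list means nothing was detected."""
    flags = [f"missing {name}" for name in REQUIRED_FIELDS
             if getattr(offer, name) is None]

    band = BANDS.get((offer.role, offer.level, offer.location))
    if band is None:
        flags.append("no band on file for this role, level, and location")
    elif offer.base_salary is not None:
        low, high = band
        if not low <= offer.base_salary <= high:
            flags.append(f"base_salary outside band {low}-{high}")
    return flags


flags = check_offer(Offer("analyst", "mid", "remote", base_salary=82_000))
print(flags)
```

For this example offer, the check reports a missing start date and a salary above the band. It only raises flags; whether to adjust the offer or approve an exception remains a human decision.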

10. Hiring process retrospectives after roles close

After a role closes, AI can analyze the full funnel: time-to-fill, drop-off points, interview feedback, and offer acceptance. Instead of a manual retrospective that rarely happens, teams get a structured summary.

This helps refine future searches. Patterns like repeated interview bottlenecks or unclear role expectations become visible. Over time, this feedback loop improves hiring quality without adding overhead to recruiters’ day-to-day work.
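Much of that summary is plain arithmetic on data most applicant tracking systems can export. The sketch below uses invented stage names, counts, and dates, and a hypothetical `retrospective` function, to show the kind of numbers a structured retrospective could start from.

```python
from datetime import date

# Hypothetical closed-role data: candidates remaining at each stage, in order.
funnel = [
    ("applied", 200),
    ("screened", 60),
    ("interviewed", 20),
    ("offered", 4),
    ("accepted", 3),
]
opened, filled = date(2025, 1, 6), date(2025, 2, 20)


def retrospective(funnel, opened, filled):
    """Summarize time-to-fill, stage conversion, and the largest drop-off."""
    conversions = {
        f"{prev_name} -> {name}": count / prev_count
        for (prev_name, prev_count), (name, count) in zip(funnel, funnel[1:])
    }
    return {
        "time_to_fill_days": (filled - opened).days,
        "conversions": conversions,
        # The transition with the lowest conversion rate is the biggest drop-off.
        "largest_drop_off": min(conversions, key=conversions.get),
        # Assumes the funnel uses these exact stage names; None otherwise.
        "offer_acceptance": conversions.get("offered -> accepted"),
    }


summary = retrospective(funnel, opened, filled)
print(summary)
```

With this sample data, the role took 45 days to fill and the steepest drop-off sits between interview and offer. Interview feedback and other free-text inputs would still need a person or a model to interpret; this only covers the countable part of the funnel.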

Can We Trust AI to Be Fair in Hiring?

AI can support fairer hiring decisions, but only under strict conditions. Left on its own, it will reflect the data, assumptions, and incentives it is given. That means any bias already present in past hiring decisions, job descriptions, or evaluation criteria can quietly carry forward.

For AI to improve fairness rather than undermine it, two safeguards matter most:

1. Data privacy: Hiring data often includes sensitive personal information, even when it isn’t explicitly labeled as such. Resume content, career gaps, education history, or location can act as proxies for protected attributes. Teams need to be clear about what data is being fed into AI systems, how long it is stored, and who has access to it. Using AI tools without this clarity creates legal and ethical risk.

2. Human oversight: AI should not make final hiring decisions, rank candidates without review, or reject applicants automatically. Its role is to assist with analysis, pattern recognition, and summarization. Humans remain responsible for judgment, context, and accountability. If a decision cannot be explained without saying “the system decided,” that’s a warning sign.

Used this way, AI becomes a support tool rather than a replacement. It helps teams spot signals, reduce manual load, and apply criteria more consistently, while people retain control over outcomes and fairness.

AI Improves Hiring Only If the Talent Pool Is Strong

AI can improve how you screen, rank, and coordinate candidates. It can shorten cycles and remove friction. What it cannot do is fix weak sourcing or turn an average candidate pool into a strong one. That’s where most hiring efforts quietly break. Teams invest in tools, prompts, and automation, but still start from the same narrow job boards and overexposed profiles.

This is the gap Pearl Talent focuses on closing. It can give you:

- Access to operators from prestigious local companies and top universities

- Talent from the Philippines, Latin America, and South Africa

- Full-time, long-term hires who work in your time zone

- Up to 60% lower payroll as your team scales, without sacrificing quality or retention

Pearl Talent hires are not placed and forgotten. They continue learning after they join your team. AI tools are part of that training, so they know how to use them in practical ways, not just experiment with them.

Browse available hires or get hand-picked profiles from Pearl Talent sent to your inbox within one business day.
