
What is AI Literacy and How to Build It in Your Organization

Artificial intelligence is no longer experimental. It is embedded in search, productivity tools, customer support systems, marketing platforms, and internal workflows across industries.

Governments, universities, and companies alike now treat AI literacy as a core skill rather than a niche technical specialty. The reason is simple: AI is reshaping how people work, make decisions, and collaborate.

Using AI tools occasionally is not the same as being literate in them.

Clicking a button, generating text, or automating a task does not build the judgment needed to interpret outputs, manage risk, or adapt workflows as AI systems evolve. Without that understanding, organizations may adopt AI broadly while still seeing inconsistent outcomes, misuse, or over-reliance on tools they do not fully grasp.

This is why AI literacy matters. It sits at the intersection of education, workforce readiness, and daily execution. It applies not only to students and educators but also to operators, managers, and frontline teams already working in AI-assisted environments.

What is AI Literacy?

AI literacy is not simply using AI tools or having access to them. It is not copying outputs from a chatbot, automating a task, or adding AI software to existing workflows and assuming understanding will follow.

Nor does it mean becoming a data scientist or analyst, or learning to build models. Not every role needs technical depth.

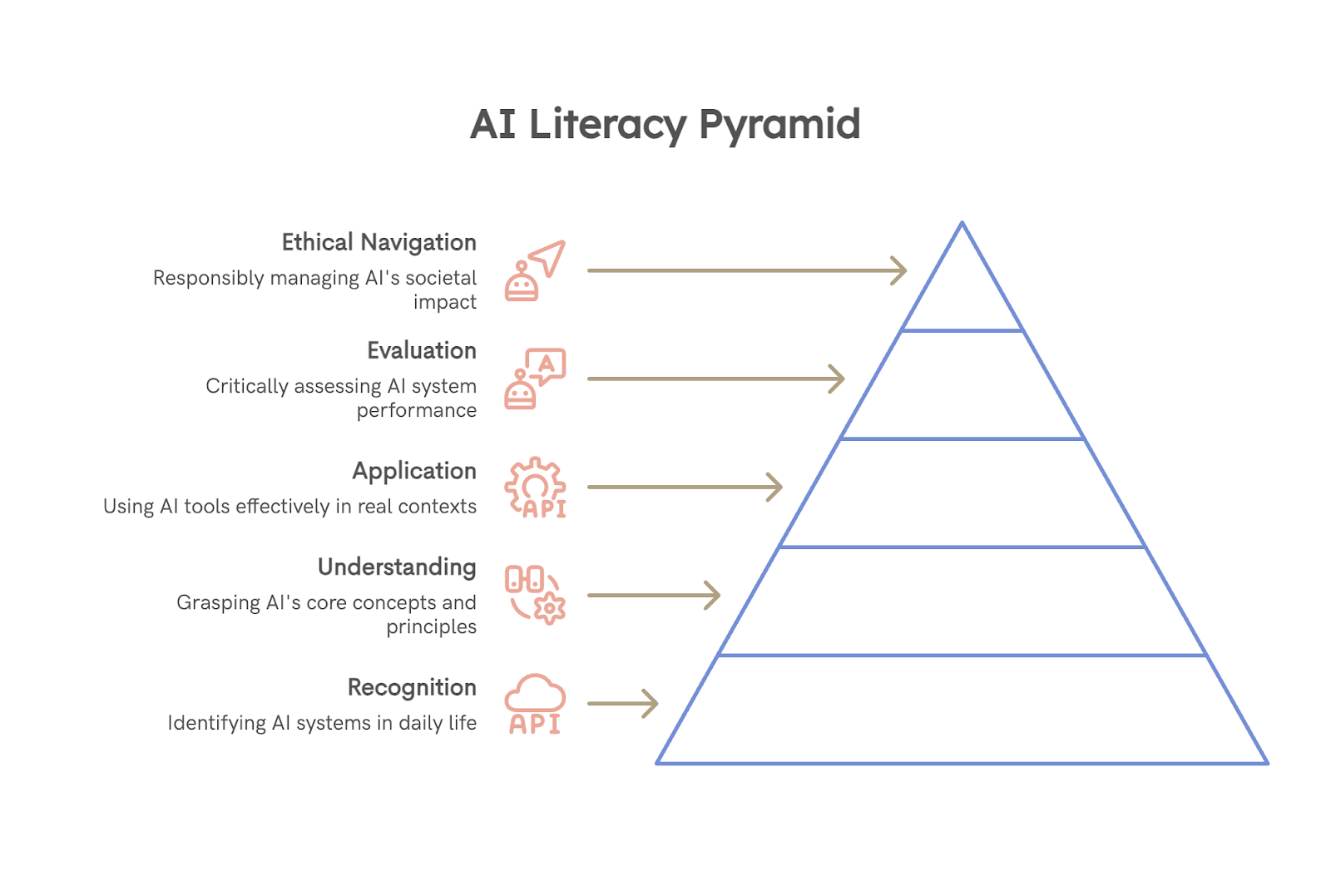

AI literacy is a multi-dimensional capability, not a single skill. Being AI-literate means being able to recognize, understand, apply, evaluate, create with, and ethically navigate AI systems in real contexts so they can be used productively and responsibly.

Consider two operations teams using the same AI forecasting tool.

The first team relies on the model’s output without questioning it. When forecasts are off, they assume the AI is “wrong” or “hallucinating” but don’t know why. They lack visibility into data quality, assumptions, or confidence levels, so errors surface only after decisions have already been made.

The second team is AI-literate. They understand what data feeds the model, where it performs well, and where it struggles. They review outputs critically, adjust inputs when conditions change, and override the system when signals don’t match reality. They also flag recurring issues so the system can be improved.

Both teams use AI. Only one can work with it competently.

The Building Blocks of AI Literacy

Using AI is only one small part of the picture. These six dimensions explain what it takes to work with AI thoughtfully and responsibly.

1. Recognize

Before anyone can work with AI well, they need to notice when it’s actually involved.

This sounds obvious, but research shows many people interact with AI-driven systems without realizing it. Recommendation engines, risk scores, content filters, and ranking systems often fade into the background.

Being able to recognize AI means:

- Knowing when a system is learning from data rather than following fixed rules

- Understanding that “smart” features are not neutral defaults

- Realizing when decisions are being shaped by models rather than people

Without this awareness, everything else breaks down. You can’t question or evaluate something you don’t realize is there.

2. Know and understand

AI literacy does not require technical depth, but it does require mental models. People need to understand that AI systems learn patterns from past data, that humans define objectives and constraints, and that outputs reflect probabilities rather than truth. This understanding helps reset expectations.

Instead of treating AI as intelligent or objective, people see it as a system shaped by data quality, design choices, and context. Research consistently shows that this kind of conceptual grounding reduces over-trust and improves decision-making.

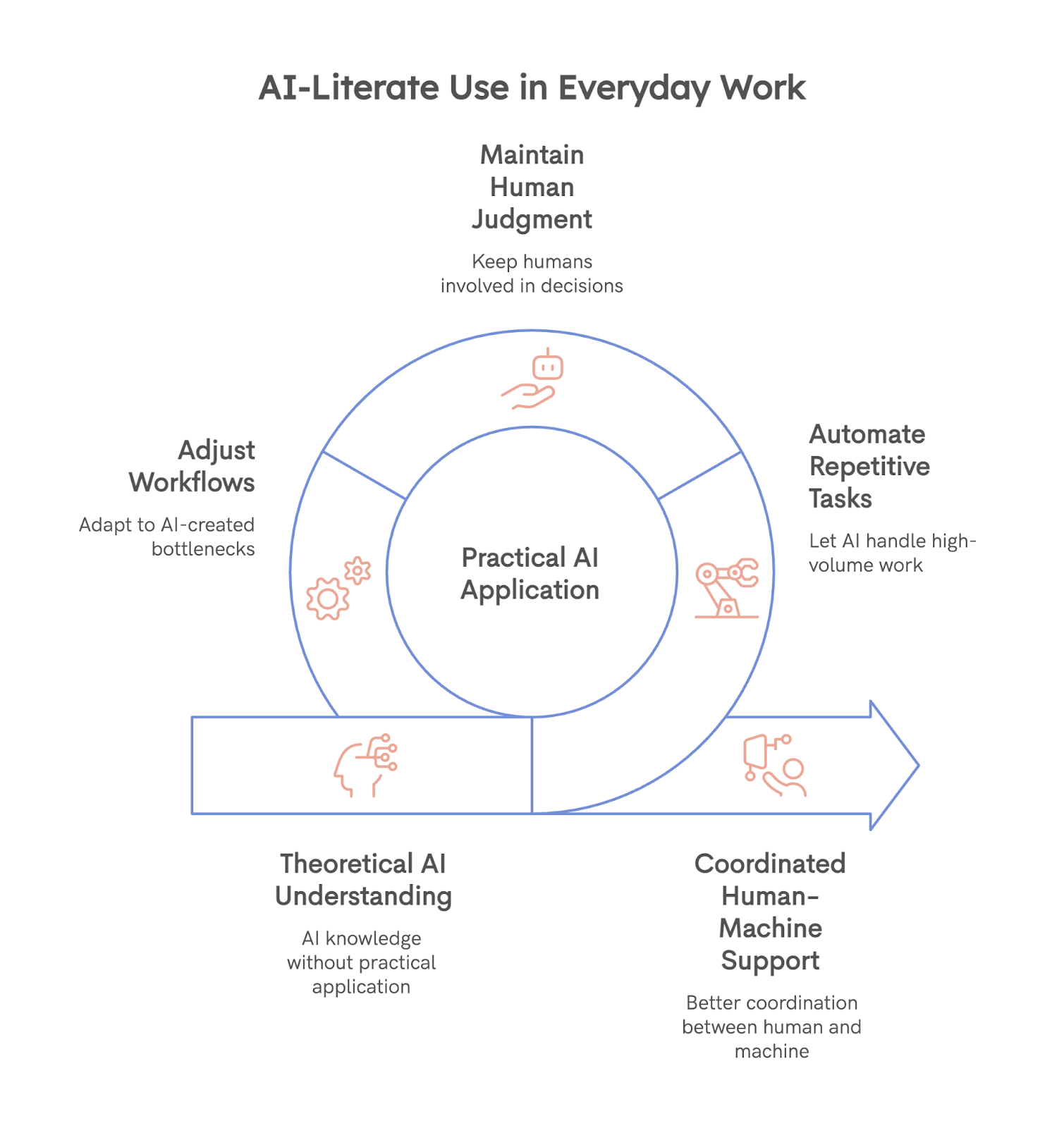

3. Use and apply

Using AI well shows up in everyday work, not in theory.

AI-literate use usually looks like small, practical choices:

- Letting AI handle repetitive or high-volume tasks

- Keeping humans involved where judgment or accountability matters

- Adjusting workflows when AI creates new bottlenecks or risks

People learn AI best by applying it to real tasks, not just by reading about it. The goal is not maximum automation but better coordination between human work and machine support.

4. Evaluate

This is where AI literacy becomes visible.

Evaluation means slowing down when AI outputs look confident and asking whether they make sense in context. It includes checking for bias, understanding when data is outdated, and noticing patterns of error over time.

People who lack evaluation skills tend to accept outputs as fact. People who have them treat AI output as a suggestion, not a verdict.

5. Create

Creation is not about turning everyone into a developer.

More often, it means shaping how AI is used rather than building it. That might involve:

- Designing prompts or decision rules

- Defining when AI is allowed to act independently

- Improving systems by giving structured feedback

For teams implementing AI at scale, the ability to shape and adapt systems over time matters more than the initial setup.

6. Navigate ethically

Ethical navigation is less about rules and more about awareness.

AI-literate people understand that AI systems affect people differently. They think about privacy, fairness, accountability, and who bears the cost when things go wrong. This does not mean having perfect answers.

It means recognizing trade-offs and making them explicit. Ethical awareness builds trust and reduces harm, especially in areas like hiring, education, healthcare, and customer decisions where AI outputs can materially affect lives.

How to Build AI Literacy in Your Organization

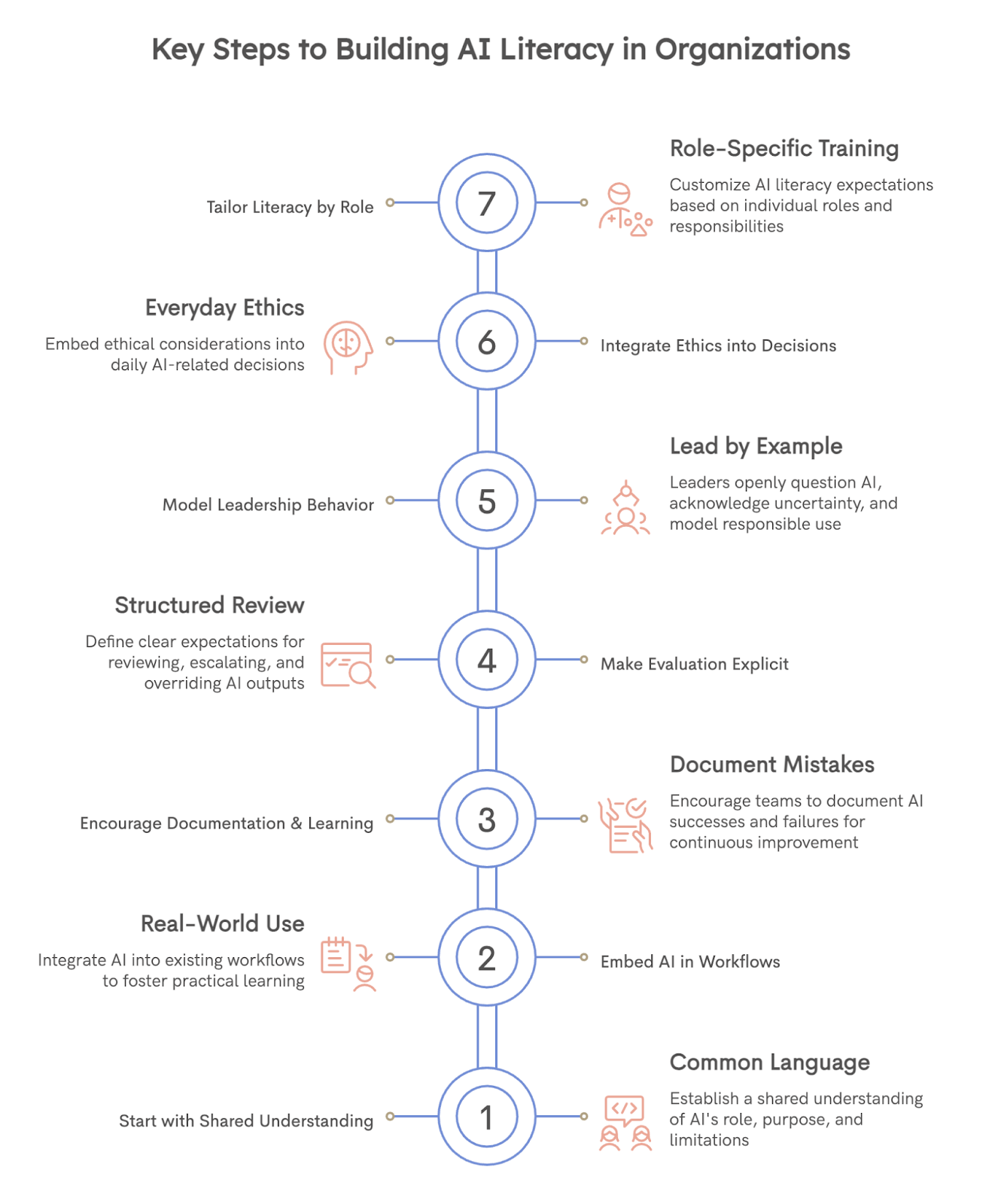

Building AI literacy is not a single training session or a rollout plan. It is an ongoing process that develops through how people learn, work, and make decisions alongside AI. Organizations that approach it as a one-time enablement effort often see surface-level adoption but little real change in outcomes.

Start with shared understanding, not tools. Teams need a common language for what AI is doing in their context. This includes where AI is being used, what problems it is meant to solve, and what its limitations are. Short internal explainers, examples from your own workflows, and open discussions about failures build this baseline far more effectively than generic AI training.

From there, literacy grows through use in real work, not abstract learning. People understand AI best when they see how it behaves in the tasks they already do.

Some ways organizations support this:

- Embed AI into existing workflows rather than creating separate "AI projects"

- Encourage teams to document when AI helps and when it breaks

- Treat mistakes as learning signals rather than usage errors

Evaluation needs to be made explicit. Many organizations assume people will naturally question AI outputs, but research shows this rarely happens without structure. Create clear expectations around review, escalation, and override.

For example:

- Define when AI outputs must be checked by a human

- Make it acceptable to challenge AI-driven recommendations

- Track patterns of error instead of isolated incidents

Leadership behavior matters more than policy. When leaders openly question AI outputs, acknowledge uncertainty, and model responsible use, teams follow. When leaders treat AI as infallible or purely efficiency-driven, literacy stalls.

Ethics should not live in a separate document. It needs to show up in everyday decisions. This can be as simple as asking a few consistent questions during rollout and review:

- Who is affected by this output?

- What happens when it’s wrong?

- Who is accountable for the decision?

Recognize that AI literacy is role-dependent. Not everyone needs the same depth. Frontline teams need confidence in use and evaluation. Managers need judgment and accountability. Technical teams need creation and system-level understanding. Tailoring expectations by role prevents overload and keeps literacy grounded in actual work.

Building AI-Literate Teams With Pearl Talent

AI literacy does not develop in isolation. It grows when people stay close to the work, see systems evolve, and take responsibility for improving how AI is used over time. That kind of learning is hard to achieve with short-term freelancers or rotating vendors. It requires stable, motivated team members who are invested in outcomes.

This is where Pearl Talent can help.

We help companies hire full-time, long-term remote talent who can grow alongside AI-driven workflows, not just execute tasks around them. You get:

- Vetted talent from top universities and reputable companies, not open job boards

- Up to 60% lower payroll costs, without compromising capability or retention

- High match quality, with a track record of successful long-term placements

- Timezone-aligned, embedded team members who work as part of your organization

Because these hires are fully integrated into your operations, they develop AI literacy in context. They learn where tools help, where they fail, and how workflows need to adapt. Over time, they become owners of systems rather than passive users.

AI literacy ultimately shows up in execution. Having the right people in place makes the difference between AI that adds value and AI that needs constant supervision. Browse available hires and find talent ready to grow with your company.

Frequently Asked Questions
